From Chatbots to Agents: Building AI Systems That Take Action

AI agents are the most overhyped and simultaneously most underestimated trend in tech right now. After building several agent systems, here's what actually works — and what doesn't.

From Chatbots to Agents: Building AI Systems That Take Action

Six months ago, every startup pitch deck included the word "agent." Today, we're starting to see which agent architectures actually work in production and which were just demos dressed up for Twitter. Having spent the last few months building agent systems for both personal projects and exploratory work, I've developed strong opinions about what separates a useful AI agent from a glorified chatbot.

Defining the Spectrum

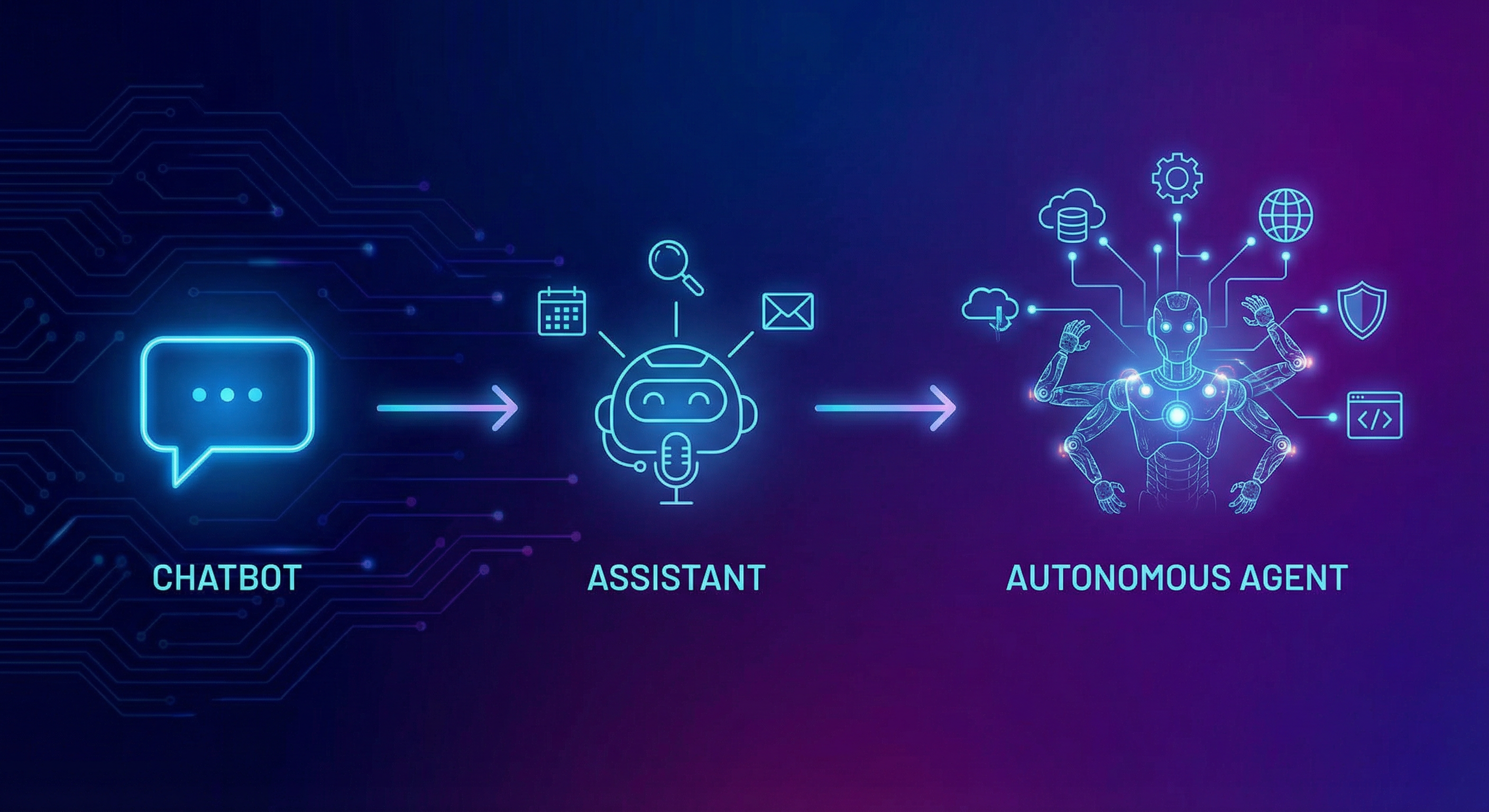

Not everything that calls itself an "agent" is one. It helps to think of AI systems on a spectrum:

Chatbots respond to messages. They're stateless (or nearly so), take a prompt, return a response. This is what most people interact with when they use ChatGPT or Claude in their default modes.

Assistants maintain context and can use tools. They remember your conversation, can search the web, read files, or call APIs. Cursor's AI features live here — the model understands your codebase and can make edits.

Agents operate autonomously toward a goal. They plan, execute, observe results, adapt, and keep going until the task is complete or they determine they can't proceed. The key difference is the loop: agents decide their own next action based on the outcome of the previous one.

What I've Learned Building Agents

1. The Planning Step Is Everything

The single biggest predictor of agent success is whether it plans before acting. An agent that jumps straight into executing tool calls will thrash — making changes, reverting them, trying something else, and eventually running out of context or budget.

The pattern that works: force a planning phase where the agent outlines its approach, identifies potential failure modes, and commits to a strategy before executing anything. This mirrors how experienced developers work — you don't start coding a complex feature without at least a mental model of the approach.

2. Tool Design Matters More Than Model Choice

I've been surprised by how much tool design affects agent performance, often more than which underlying model you use. A well-designed tool with clear descriptions, constrained inputs, and informative error messages will outperform a more powerful model working with poorly designed tools.

// Bad: Vague tool that invites misuse

{

name: "run_command",

description: "Run a shell command",

input: { command: "string" }

}

// Good: Specific, constrained, informative

{

name: "search_codebase",

description: "Search for files matching a pattern. Returns file paths sorted by relevance. Use when you need to find where something is defined or used.",

input: {

query: "string — the search term or regex pattern",

file_type: "string — optional file extension filter (e.g., '.ts', '.py')",

max_results: "number — defaults to 10"

}

}

3. Failure Recovery Is the Hard Part

Demos show agents succeeding. Production requires agents that handle failure gracefully. The agent that encounters an API rate limit, a malformed response, or an unexpected state and recovers without human intervention — that's the hard engineering problem.

I've found that the most robust pattern is to give agents explicit "I'm stuck" affordances. Rather than letting them loop forever, build in mechanisms for the agent to escalate, ask for clarification, or gracefully degrade to a simpler approach.

4. Memory and Context Management Are Unsolved

As someone who built features at GitLab that dealt with large-scale data processing, I appreciate the complexity of context management. Current agents struggle with long-running tasks because they lose context. Conversation windows fill up, early decisions get forgotten, and the agent starts contradicting itself.

The approaches that partially work:

- Structured scratchpads where the agent maintains a summary of decisions made and current state

- Hierarchical agents where a planning agent delegates subtasks to execution agents with focused context

- Checkpointing where the agent's state is periodically serialized so it can be resumed

None of these are perfect. This is the area where I expect the most progress over the next year.

The Architecture That Works

After several iterations, the agent architecture I've settled on looks like this:

- Orchestrator: Receives the high-level goal, creates a plan, and manages the execution loop

- Specialists: Focused agents that handle specific domains (code changes, research, testing)

- Evaluator: Reviews outputs against the original goal and determines if the task is complete

- Memory layer: Maintains structured state across the execution loop

The key insight is separation of concerns. A single agent trying to plan, execute, and evaluate simultaneously performs worse than specialized agents coordinated by an orchestrator.

Where We Are vs. Where We're Going

Today's agents are genuinely useful for well-scoped, technical tasks: code refactoring, data analysis, content generation with specific constraints. They struggle with ambiguous goals, tasks requiring creativity or judgment, and anything that needs real-world interaction beyond API calls.

What excites me is the convergence of agents with tool protocols like MCP (which I wrote about last month). As the tool ecosystem standardizes, agents get access to a broader set of capabilities without custom integration work. The agent that can compose MCP tools dynamically — discovering available tools, selecting the right ones, and chaining them together — is going to be transformative.

We're in the "building the plumbing" phase. It's not glamorous, but it's essential. The teams that invest in robust agent infrastructure now — good tool design, reliable failure recovery, efficient context management — will be the ones shipping genuinely autonomous systems in 12-18 months.

If you're experimenting with agents, start small. Build an agent that handles one well-defined workflow end-to-end before trying to build a general-purpose autonomous system. The lessons from that single workflow will be worth more than any framework tutorial.